Blake Smith

Cyborgs Old & New

The Handover: How We Gave Control of Our Lives to Corporations, States and AIs

By David Runciman

Profile 336pp £20

Artificial intelligence, it is commonly acknowledged, will pose one of the gravest challenges to humanity in the coming years. In the minds of some, it is already the most urgent problem we face. While there are a number of possible dangers that might bring about the extinction of our species, AI confronts us with a particularly dire situation, because it may well be that we have only a brief amount of time – perhaps a generation – in which to set up norms and constraints on the development of autonomous, non-human intelligences that may otherwise escape our control.

Like the peril of nuclear war, which we have managed to live with despite the enormous irresponsibility of the nuclear-armed superpowers (even now engaged in a proxy war in Ukraine), AI is a danger we ourselves have created. As with climate change, it requires collective action; indeed an unprecedented degree of global political coordination will be needed in the immediate future if its growing risk is to be contained. Unlike these other menaces, however, AI may not simply extinguish humanity, in the manner of a climate or nuclear disaster, but supplant us.

For secular, modern people accustomed to imagining the human mind as the centre, measure and lever of the universe, it is a brutal shock even to imagine facing a non-human entity that is smarter and more powerful than we are, and perhaps indifferent or even hostile to us. According to some forecasters, AI will forcibly return us, as it were, to the intellectual position of religious believers who must try to understand, manipulate, propitiate or serve non-human forces that surpass us in every respect.

From Bruno Latour to Sam Kriss, contemporary theorists and commentators contemplating the digital revolution and the rise of networked, algorithmic forms of ‘thinking’ that take place outside the human brain have spoken of these phenomena in terms that recall premodern world views. To cite the science fiction writer Arthur C Clarke’s memorable phrase, AI may eventually be ‘indistinguishable from magic’, engendering the sort of superstitious thinking that modern enlighteners – whose ideas and inventions have led us, ironically, to this state of affairs – meant to banish from the world.

David Runciman, however, in his latest book, The Handover, makes salutary arguments against such an understanding of the challenge of AI. He insists that modernity (in other words, the past few centuries) has been defined by the rise and dominance of two ‘artificial intelligences’: the state and the corporation. They offer a model for making sense of AI and they will be, in the coming years, our best means of restraining it.

In our everyday interactions with states and corporations, we do not typically think of them as vast, powerful, long-lived, non-human ‘intelligences’. But, as Runciman rightly reminds us, the premier theorist of the modern state, Thomas Hobbes, explicitly conceived of it in such terms. A long intellectual tradition, likewise, has emphasised that states and corporations, organised alike through bureaucratic forms that restrict the personal spontaneity of their human components via techniques of abstraction and routinisation, possess peculiar modes of ‘thinking’ that are not quite comparable to the way individual people think. Theorists like Emile Durkheim, Max Weber and Michel Foucault registered, with different degrees of admiration and horror, their recognition that the modern era had witnessed the almost total subsuming of human life, at least in the developed world, into forms imposed on it by these artificial intelligences.

Today’s well-justified fears about AI, Runciman cogently argues, should not blind us to the many ways in which humanity has already transformed itself over the past few centuries, outsourcing much of its decision-making to large, opaque, impersonal entities that dominate our political, economic and indeed personal lives. There is a great deal of frustration, at all levels of our societies, with the power of states and corporations and with the fundamental difference between their abstract, algorithmic forms of ‘thinking’ and our own human horizons, which they often seem, with terrible indifference, to ignore.

In the academy, for example, thinkers like James C Scott, from an anarcho-left perspective, and Milton Friedman, from a libertarian one, have claimed that the state’s peculiar ways of thinking are inimical to human flourishing and that its projects to promote our welfare inevitably backfire. In electoral politics, Runciman notes, throughout the West voters increasingly favour supposedly charismatic politicians, who offer a sort of dubious reassurance that the impersonal mechanisms of the state (itself, these past few decades, acting largely in the service of that other inhuman, abstract decision-maker, the ‘market’) are at least overseen by someone like us.

AI will exacerbate these tendencies. It has already increased the ability of states, particularly the United States and China, to surveil their citizens, and the ability of corporations, such as Meta and Google, to supply states with data and to manipulate consumers for their own ends. Soon it may even undermine rather than augment the power of states and corporations, becoming self-directing, able to act on its own initiative and, still more ominously, capable of increasing its own ‘intelligence’. Because of this danger, humanity must, Runciman warns, work quickly, while AI is still potentially subject to constraint, to corral its potential for runaway, autonomous growth.

Humanity, however, is not a coherent political actor, as Runciman reminds us. We are not squaring off with AI in a one-on-one battle pitting our species against it. Human beings are already entangled with artificial intelligences, in the form of the state and corporations. Our relationship with AI will be no less complicated.

Runciman compellingly places AI in a broad historical context, making it appear, if not less terrifying, then at any rate less radically new than newspaper columnists often breathlessly make it out to be. The crux of the problem is precisely that in order to have a chance of living decently with AI we will have to fix our relationship with those earlier iterations of artificial intelligence, the state and the corporation, both of which are currently using AI in ways that undermine our freedom and look unlikely to contain indefinitely its potential to escape human control.

We have, therefore, a double task, one that The Handover has the great merit of clarifying. We must restore the conditions for rational collective action in the political realm. Losing faith in the bureaucratic machinery of the state or the invisible hand of the market, many are turning to what will prove to be the disappointing fantasies presented by the charismatic populist or the tech CEO with Caesarean ambitions as a way of reintroducing a ‘human’ element to our lives. Rejecting that false choice, Runciman confronts us with our responsibility to rehabilitate existing forms of political and economic decision-making before it is too late.

Sign Up to our newsletter

Receive free articles, highlights from the archive, news, details of prizes, and much more.@Lit_Review

Follow Literary Review on Twitter

Twitter Feed

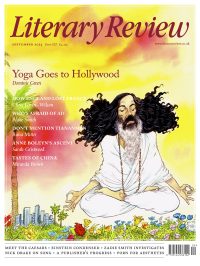

Knowledge of Sufism increased markedly with the publication in 1964 of The Sufis, by Idries Shah. Nowadays his writings, much like his father’s, are dismissed for their Orientalism and inaccuracy.

@fitzmorrissey investigates who the Shahs really were.

Fitzroy Morrissey - Sufism Goes West

Fitzroy Morrissey: Sufism Goes West - Empire’s Son, Empire’s Orphan: The Fantastical Lives of Ikbal and Idries Shah by Nile Green

literaryreview.co.uk

Rats have plagued cities for centuries. But in Baltimore, researchers alighted on one surprising solution to the problem of rat infestation: more rats.

@WillWiles looks at what lessons can be learned from rat ecosystems – for both rats and humans.

Will Wiles - Puss Gets the Boot

Will Wiles: Puss Gets the Boot - Rat City: Overcrowding and Urban Derangement in the Rodent Universes of John B ...

literaryreview.co.uk

Twisters features destructive tempests and blockbuster action sequences.

@JonathanRomney asks what the real danger is in Lee Isaac Chung's disaster movie.

https://literaryreview.co.uk/eyes-of-the-storm